Building a single-master k8s cluster on CentOS 7 (version 1.20.1-0)


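Machines and environment

OS: CentOS 7.4. Machines:

    192.168.131.130 k8s-master
    192.168.131.141 k8s-node1
    192.168.131.142 k8s-node2

Machine specs:

- k8s-master: 2 cores, 4 GB
- k8s-node1: 4 cores, 8 GB
- k8s-node2: 4 cores, 8 GB

Requirement: each machine needs at least 2 CPU cores and 2 GB of memory. If you use virtual machines, allocate resources according to what your host can spare.

Note

Unless a step names a specific host, run it on every machine.

Preparing the machines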

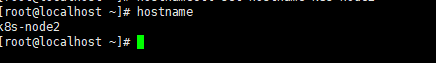

Setting the hostname

On k8s-master, run: `hostnamectl set-hostname k8s-master`

On k8s-node1, run: `hostnamectl set-hostname k8s-node1`

On k8s-node2, run: `hostnamectl set-hostname k8s-node2`

Check the result: `hostname`

Adding hostname mappings

Open `/etc/hosts` with `vi /etc/hosts` and add:

```shell
192.168.131.130 k8s-master
192.168.131.141 k8s-node1
192.168.131.142 k8s-node2
```

Note: replace these IPs with the addresses of your own machines.

Check:

    ping k8s-master
    ping k8s-node1
    ping k8s-node2
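The mapping step above can also be scripted so that it is safe to rerun on every machine. This is only a minimal sketch: `add_host_entry` is a hypothetical helper name, and the addresses are the example ones from this post.

```shell
# add_host_entry is a hypothetical helper (not part of any tool).
# It appends "IP NAME" to a hosts file only if NAME is not already mapped.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # Look for NAME as a whole field on any non-comment line.
  if ! awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# On a real machine (as root), with the example addresses:
# add_host_entry /etc/hosts 192.168.131.130 k8s-master
# add_host_entry /etc/hosts 192.168.131.141 k8s-node1
# add_host_entry /etc/hosts 192.168.131.142 k8s-node2
```

Because the helper checks before appending, running it twice does not duplicate entries.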

Disabling the firewall

    systemctl stop firewalld && systemctl disable firewalld

Disabling SELinux

- Temporarily: `setenforce 0`

- Permanently: open `/etc/selinux/config` with `vi /etc/selinux/config` and set SELINUX to disabled

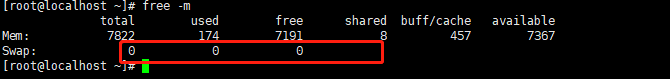
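If you would rather not edit the file by hand, the permanent change can be made with `sed`. A minimal sketch, assuming GNU sed (the default on CentOS); `set_selinux_disabled` is a hypothetical helper name.

```shell
# set_selinux_disabled is a hypothetical helper: it rewrites the active
# SELINUX= line in the given config file and leaves everything else alone.
set_selinux_disabled() {
  local file="$1"
  # "^SELINUX=" does not match "SELINUXTYPE=" or commented lines.
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}

# On a real machine (as root):
# set_selinux_disabled /etc/selinux/config
```

The change in the file only takes effect after a reboot, which is why `setenforce 0` is still used for the current session.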

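Disabling swap

- Temporarily: `swapoff -a`

- Permanently: open `/etc/fstab` with `vi /etc/fstab` and comment out the swap entry (the last line)

To check, run `free -m`; swap is off when every value in the Swap row is zero.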
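Commenting out the swap entry can be scripted as well. A minimal sketch, assuming GNU sed and an fstab in which the swap entry has `swap` as a whitespace-separated field; `comment_out_swap` is a hypothetical helper name.

```shell
# comment_out_swap is a hypothetical helper: it prefixes "#" to every
# uncommented line that contains "swap" as a whitespace-delimited field.
comment_out_swap() {
  local file="$1"
  sed -i -E '/^[[:space:]]*#/! s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# On a real machine (as root); keep a backup of fstab first:
# cp /etc/fstab /etc/fstab.bak && comment_out_swap /etc/fstab
```

Lines that are already commented are skipped, so running it again does not stack extra `#` characters.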

Enabling bridge-nf

Open `/etc/sysctl.conf` with `vi /etc/sysctl.conf` and append the following settings:

    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    net.bridge.bridge-nf-call-arptables = 1
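The same three settings can be appended by script, and applied without waiting for the reboot. A minimal sketch: `ensure_sysctl` and `apply_bridge_nf` are hypothetical helper names. Note that the `net.bridge.*` keys only exist once the `br_netfilter` kernel module is loaded.

```shell
# ensure_sysctl is a hypothetical helper: it appends "key = value" to the
# file unless an uncommented line for that key is already present.
ensure_sysctl() {
  local file="$1" key="$2" value="$3"
  if ! awk -F'=' -v k="$key" '
        { gsub(/[[:space:]]/, "", $1); if ($1 == k) found = 1 }
        END { exit !found }' "$file" 2>/dev/null; then
    printf '%s = %s\n' "$key" "$value" >> "$file"
  fi
}

# apply_bridge_nf (also hypothetical) adds the three settings from this post.
apply_bridge_nf() {
  local file="$1" key
  for key in ip6tables iptables arptables; do
    ensure_sysctl "$file" "net.bridge.bridge-nf-call-$key" 1
  done
}

# On a real machine (as root):
# modprobe br_netfilter
# apply_bridge_nf /etc/sysctl.conf && sysctl -p
```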

Rebooting and verifying

- Reboot the server: `reboot now`

- Check that the firewall is off: `systemctl status firewalld`

- Check that SELinux is off: `getenforce`

- Check that swap is off: `free -m`

Installing Docker

- See the Docker installation blog post

- Short version: `yum install docker -y && systemctl start docker && systemctl enable docker`

Time synchronization

- Install the time sync packages: `yum install -y ntp ntpdate`

- Sync the clock once: `ntpdate cn.pool.ntp.org`

- Start ntpd and enable it at boot: `systemctl start ntpd && systemctl enable ntpd`

Installing the basic Kubernetes components

Configuring a Kubernetes mirror repository (China)

    cat <<EOF > /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

Installing the components

- Install kubelet, kubeadm and kubectl: `yum install -y kubelet-1.20.1-0 kubeadm-1.20.1-0 kubectl-1.20.1-0 --disableexcludes=kubernetes`

- Start kubelet and enable it at boot: `systemctl start kubelet && systemctl enable kubelet`

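Installing the master node

Note: run this section on k8s-master only.

Creating the cluster

Run:

    kubeadm init \
      --kubernetes-version=v1.20.1 \
      --pod-network-cidr=10.244.0.0/16 \
      --image-repository registry.aliyuncs.com/google_containers \
      --apiserver-advertise-address 192.168.131.130 \
      --v=6

Parameters:

- kubernetes-version: the version to install
- pod-network-cidr: the subnet used for the pod network
- image-repository: the image registry to pull from (pulling from Aliyun works around k8s.gcr.io images being unreachable)
- apiserver-advertise-address: the IP of this server that the API server binds to (on a machine with several network cards, use this parameter to pick the IP)
- v=6: I have not looked this one up in the documentation yet; in practice it makes initialization print detailed output, and when deployment goes wrong the details often show where the problem is

Some images may still fail to pull. In that case, list the images and versions that are required, pull them manually, and then tag them:

    kubeadm config images list

If init reports errors:

- Fix the error according to the error log

- Reset to undo the init: `kubeadm reset`

- Run init again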
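The manual pull-and-tag route mentioned above could look roughly like the sketch below. It rests on an assumption that should be checked against your own setup: that the Aliyun mirror carries each image under the same name and tag as upstream. `mirror_name` and `pull_and_tag` are hypothetical helper names.

```shell
# Mirror registry used elsewhere in this post.
MIRROR="registry.aliyuncs.com/google_containers"

# mirror_name (hypothetical helper): map an upstream reference such as
# k8s.gcr.io/kube-apiserver:v1.20.1 to the same "name:tag" under the mirror.
# Assumption: the mirror keeps upstream image names and tags unchanged.
mirror_name() {
  # ${1##*/} drops everything up to the last "/", leaving "name:tag".
  printf '%s/%s\n' "$MIRROR" "${1##*/}"
}

# pull_and_tag (hypothetical helper): read upstream image references from
# stdin, pull each from the mirror, then tag it with the upstream name.
pull_and_tag() {
  local image
  while IFS= read -r image; do
    [ -n "$image" ] || continue
    docker pull "$(mirror_name "$image")" &&
      docker tag "$(mirror_name "$image")" "$image"
  done
}

# On the master:
# kubeadm config images list --kubernetes-version=v1.20.1 | pull_and_tag
```

After tagging, the images are available locally under the names kubeadm expects.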

After a successful install

Add the kubeconfig to the environment variables:

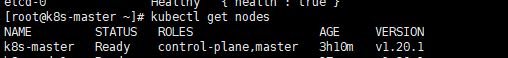

    echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile
    source ~/.bash_profile

Check the status:

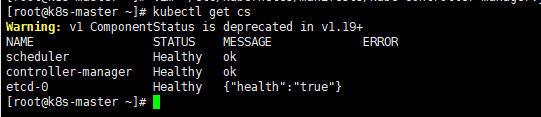

    kubectl get cs

If scheduler and controller-manager show as Unhealthy, the usual cause is that kube-scheduler and kube-controller-manager disable their insecure ports by default. Change the `port=0` setting (typically by commenting out the `--port=0` argument) in both manifests:

    vi /etc/kubernetes/manifests/kube-scheduler.yaml
    vi /etc/kubernetes/manifests/kube-controller-manager.yaml

Restart kubelet:

    systemctl restart kubelet

Check the status again:

    kubectl get cs
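The `port=0` edit can be done with `sed` instead of `vi`. A minimal sketch, assuming GNU sed and that the argument appears on its own line as `- --port=0`; `comment_out_port_zero` is a hypothetical helper name.

```shell
# comment_out_port_zero is a hypothetical helper: it turns the line
# "    - --port=0" into "    # - --port=0", keeping the indentation.
comment_out_port_zero() {
  local file="$1"
  sed -i -E 's/^([[:space:]]*)(- --port=0[[:space:]]*)$/\1# \2/' "$file"
}

# On the master (as root):
# comment_out_port_zero /etc/kubernetes/manifests/kube-scheduler.yaml
# comment_out_port_zero /etc/kubernetes/manifests/kube-controller-manager.yaml
# systemctl restart kubelet
```

A line that is already commented no longer matches the pattern, so the helper can be rerun safely.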

Installing the Flannel network plugin

- Create a directory: `mkdir -p /opt/yaml`

- Create the yaml file:

```shell
cd /opt/yaml && touch kube-flannel.yaml && vi kube-flannel.yaml
```

Paste the following content into the file:

    ---
    apiVersion: policy/v1beta1
    kind: PodSecurityPolicy
    metadata:
      name: psp.flannel.unprivileged
      annotations:
        seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
        seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
        apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
        apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
    spec:
      privileged: false
      volumes:
      - configMap
      - secret
      - emptyDir
      - hostPath
      allowedHostPaths:
      - pathPrefix: "/etc/cni/net.d"
      - pathPrefix: "/etc/kube-flannel"
      - pathPrefix: "/run/flannel"
      readOnlyRootFilesystem: false
      # Users and groups
      runAsUser:
        rule: RunAsAny
      supplementalGroups:
        rule: RunAsAny
      fsGroup:
        rule: RunAsAny
      # Privilege Escalation
      allowPrivilegeEscalation: false
      defaultAllowPrivilegeEscalation: false
      # Capabilities
      allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
      defaultAddCapabilities: []
      requiredDropCapabilities: []
      # Host namespaces
      hostPID: false
      hostIPC: false
      hostNetwork: true
      hostPorts:
      - min: 0
        max: 65535
      # SELinux
      seLinux:
        # SELinux is unused in CaaSP
        rule: 'RunAsAny'
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: flannel
    rules:
    - apiGroups: ['extensions']
      resources: ['podsecuritypolicies']
      verbs: ['use']
      resourceNames: ['psp.flannel.unprivileged']
    - apiGroups:
      - ""
      resources:
      - pods
      verbs:
      - get
    - apiGroups:
      - ""
      resources:
      - nodes
      verbs:
      - list
      - watch
    - apiGroups:
      - ""
      resources:
      - nodes/status
      verbs:
      - patch
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: flannel
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: flannel
    subjects:
    - kind: ServiceAccount
      name: flannel
      namespace: kube-system
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: flannel
      namespace: kube-system
    ---
    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: kube-flannel-cfg
      namespace: kube-system
      labels:
        tier: node
        app: flannel
    data:
      cni-conf.json: |
        {
          "name": "cbr0",
          "cniVersion": "0.3.1",
          "plugins": [
            {
              "type": "flannel",
              "delegate": {
                "hairpinMode": true,
                "isDefaultGateway": true
              }
            },
            {
              "type": "portmap",
              "capabilities": {
                "portMappings": true
              }
            }
          ]
        }
      net-conf.json: |
        {
          "Network": "10.244.0.0/16",
          "Backend": {
            "Type": "vxlan"
          }
        }
    ---
    apiVersion: apps/v1
    kind: DaemonSet
    metadata:
      name: kube-flannel-ds
      namespace: kube-system
      labels:
        tier: node
        app: flannel
    spec:
      selector:
        matchLabels:
          app: flannel
      template:
        metadata:
          labels:
            tier: node
            app: flannel
        spec:
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: kubernetes.io/os
                    operator: In
                    values:
                    - linux
          hostNetwork: true
          priorityClassName: system-node-critical
          tolerations:
          - operator: Exists
            effect: NoSchedule
          serviceAccountName: flannel
          initContainers:
          - name: install-cni
            image: quay.io/coreos/flannel:v0.13.1-rc1
            command:
            - cp
            args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
            volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
          containers:
          - name: kube-flannel
            image: quay.io/coreos/flannel:v0.13.1-rc1
            command:
            - /opt/bin/flanneld
            args:
            - --ip-masq
            - --kube-subnet-mgr
            resources:
              requests:
                cpu: "100m"
                memory: "50Mi"
              limits:
                cpu: "100m"
                memory: "50Mi"
            securityContext:
              privileged: false
              capabilities:
                add: ["NET_ADMIN", "NET_RAW"]
            env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
          volumes:
          - name: run
            hostPath:
              path: /run/flannel
          - name: cni
            hostPath:
              path: /etc/cni/net.d
          - name: flannel-cfg
            configMap:
              name: kube-flannel-cfg

Replace quay.io with the USTC (University of Science and Technology of China) mirror, quay.mirrors.ustc.edu.cn:

    sed -i 's#quay.io/coreos/flannel#quay.mirrors.ustc.edu.cn/coreos/flannel#' /opt/yaml/kube-flannel.yaml

Run the install command:

```shell
kubectl apply -f /opt/yaml/kube-flannel.yaml
```

- Check the node status: `kubectl get nodes`

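Installing the nodes

Note: run this section on the node machines only, except where a step says otherwise.

Configuring environment variables

- Copy the config file from the master to the nodes. Note: run this on the master.

```shell
scp /etc/kubernetes/admin.conf root@k8s-node1:/etc/kubernetes/
scp /etc/kubernetes/admin.conf root@k8s-node2:/etc/kubernetes/
```

Add the environment variable on the nodes. Note: run this on each node.

    echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile
    source ~/.bash_profile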

Joining the nodes to the master

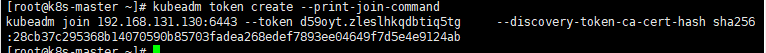

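Some configuration was changed and the master was restarted, so to avoid errors we generate a fresh join token. On the master, run:

    kubeadm token create --print-join-command

On each node, run the command it prints. The token and hash below come from my cluster, so use the values printed by your own master:

    kubeadm join 192.168.131.130:6443 --token d59oyt.zleslhkqdbtiq5tg --discovery-token-ca-cert-hash sha256:28cb37c295368b14070590b85703fadea268edef7893ee04649f7d5e4e9124ab --v=6

Note: the trailing `--v=6` is not part of the printed command; append it yourself.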

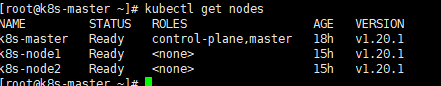

On the master, run:

    kubectl get nodes

Troubleshooting

Diagnosing a node stuck in NotReady

One of the Docker containers may have failed to start.

Run:

    kubectl get pods -o wide --all-namespaces

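- Find the pods whose READY column shows 0/1, then run `docker logs <container-id>` on the node that hosts the pod to see why the container will not start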
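With many pods, picking out the 0/1 rows by eye gets tedious. The sketch below filters the listing for pods whose ready count is lower than their container count. `not_ready_pods` is a hypothetical helper name, and it assumes the column layout of `kubectl get pods --all-namespaces`, where READY is the third column.

```shell
# not_ready_pods is a hypothetical helper: it reads the output of
# "kubectl get pods --all-namespaces" on stdin and prints namespace/name
# for every pod whose READY column (x/y) has x different from y.
not_ready_pods() {
  awk 'NR > 1 { split($3, r, "/"); if (r[1] != r[2]) print $1 "/" $2 }'
}

# On the master:
# kubectl get pods -o wide --all-namespaces | not_ready_pods
```

Pods of finished jobs (status Completed) also show 0/1, so they will appear in the list too.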